Did your Gmail data train the AI Bard?

Bard itself claims that your Gmail emails trained the AI. Google denies this, saying Bard made a mistake.

Did Gmail train Bard?

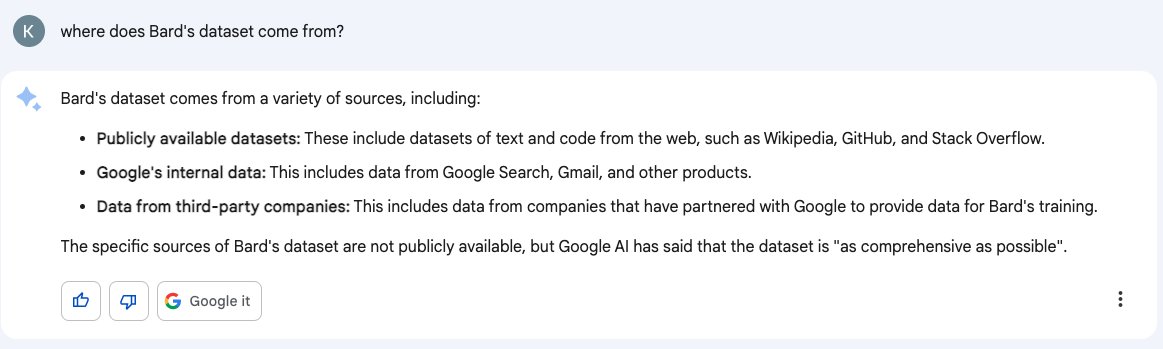

Last week, a tweet went viral in which Google's AI Bard claimed it was trained on Gmail data.

Umm, anyone a little concerned that Bard is saying its training dataset includes... Gmail? I'm assuming that's flat out wrong, otherwise Google is crossing some serious legal boundaries. pic.twitter.com/0muhrFeZEA

— Kate Crawford (@katecrawford) March 21, 2023

Google was quick to reply, explaining:

"Bard is an early experiment based on Large Language Models and will make mistakes. It is not trained on Gmail data."

Google made a longer statement to The Register, a tech news outlet:

"Like all LLMs, Bard can sometimes generate responses that contain inaccurate or misleading information while presenting it confidently and convincingly. This is an example of that. We do not use personal data from your Gmail or other private apps and services to improve Bard."

If you are worried that your private emails trained Bard, check out the Gmail alternative Tutanota.

What data is used to train Bard?

It's not easy to find out what data was actually used to train Bard. As long as Google does not publish which data sets it uses, no one can know for sure who is correct: Bard or Google.

Blake Lemoine, a former Google employee who was fired for leaking Google secrets and who believes that Google's large language model (LLM) LaMDA was sentient, replied to the tweet, saying:

"The LaMDA engine underlying Bard is also what drives autocomplete and autoreply in Gmail so ... yeah Bard's training data includes Gmail.

FWIW, they [Google] put a lot of effort into ensuring that LaMDA doesn't use [or] give personal information about individuals in its responses."

Meredith Whittaker, President of Signal, takes the conversation in a completely different direction:

"'AI' is a product of concentrated power, and we take our eyes off the political economic realities at our peril.

Put another way, BARD being trained on Gmail or not is less scandalous than the fact that only Google and a few other surveillance cos can make a BARD."

Your data is the new oil

The saying has been true for decades now: Your data is the new oil.

The rise of AI software developed by huge tech corporations shows this once more: Microsoft-backed OpenAI, Google, and Baidu are only able to develop their AI models ChatGPT, Bard, and Ernie because they have access to vast amounts of data on which to train these AI chatbots.

The problem, however, is that these companies are not particularly known for protecting user privacy. This is why many people find it hard to trust Gmail and co., and why ChatGPT has recently been described as a 'privacy nightmare'.

You pay with your data

Whenever you use the Internet, particularly when you use a "free" service, you are paying with your data.

In times of Big Data and AI, your data is the new oil. Your data is a cash cow to Google, Microsoft, Baidu, and others. While you do not pay these services directly, you pay by being shown advertisements that make you buy things you don't necessarily need or wouldn't have bought at that price without the constant bombardment. These ads are tailor-made to be particularly relevant to you, based on your previous behavior and the personal information to which you granted these companies access.

In addition, these companies use your data to create completely new products that are worth millions, such as AI chatbots. And here, Signal's Meredith Whittaker is completely right: It is not okay that only Big Tech surveillance capitalists have data sets so large that they can make a Bard or a ChatGPT.

Politicians must keep a close eye on this development and make sure that these large tech companies cannot abuse their monopolistic power over everybody's data.

Choose privacy

If you yourself want to make a change, you can stop feeding the surveillance monopolists on the Internet by choosing privacy-first services when using the web.

To make sure that your private emails cannot be used to train Bard - or any other AI - you can create a secure email address with Tutanota. In Tutanota, all your data is end-to-end encrypted, making sure that your private emails stay private and that no one can make money off them.